Project Detail

Bilingual Ceremony Script Generator (NotebookLM Collaboration)

Produced grounded Japanese/English ceremony scripts with strong style control and iterative refinement.

Executive Summary

Problem

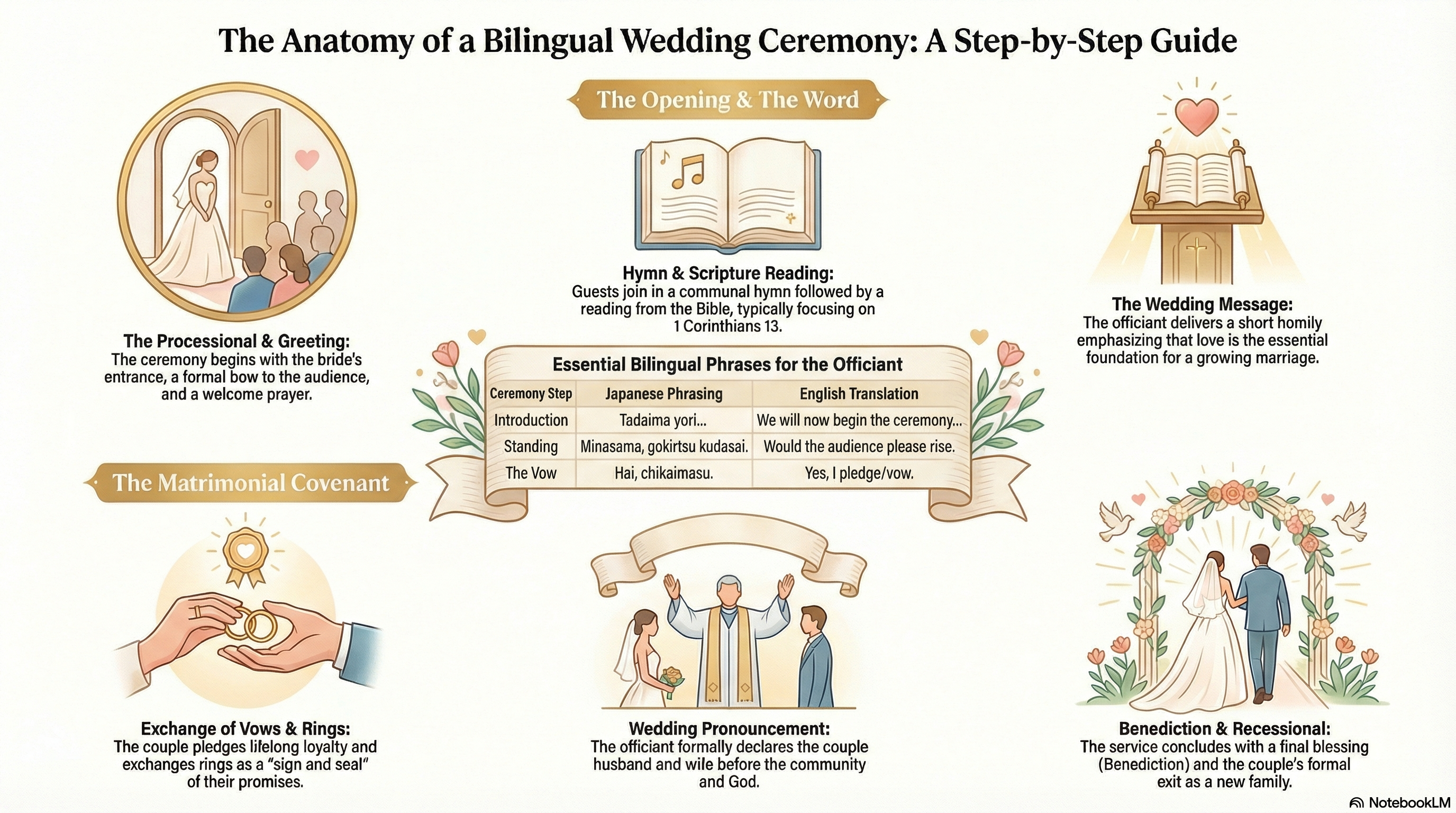

Ceremony script drafting required fast bilingual output while preserving tone and consistency with prior successful ceremonies.

Solution

Built a grounded generation process in NotebookLM, anchored to 10 prior scripts, then iterated with human review to tune style and bilingual fluency.

Outcome

Delivered tailored Japanese/English ceremony drafts aligned to the Ancient Grecian theme with improved consistency and editorial efficiency.

Use Case & Stakeholders

Content planners needed high-quality bilingual scripts without sacrificing authenticity, ceremony flow, or stylistic coherence.

- Event planning leads

- Ceremony script reviewers

- Bilingual MC and production teams

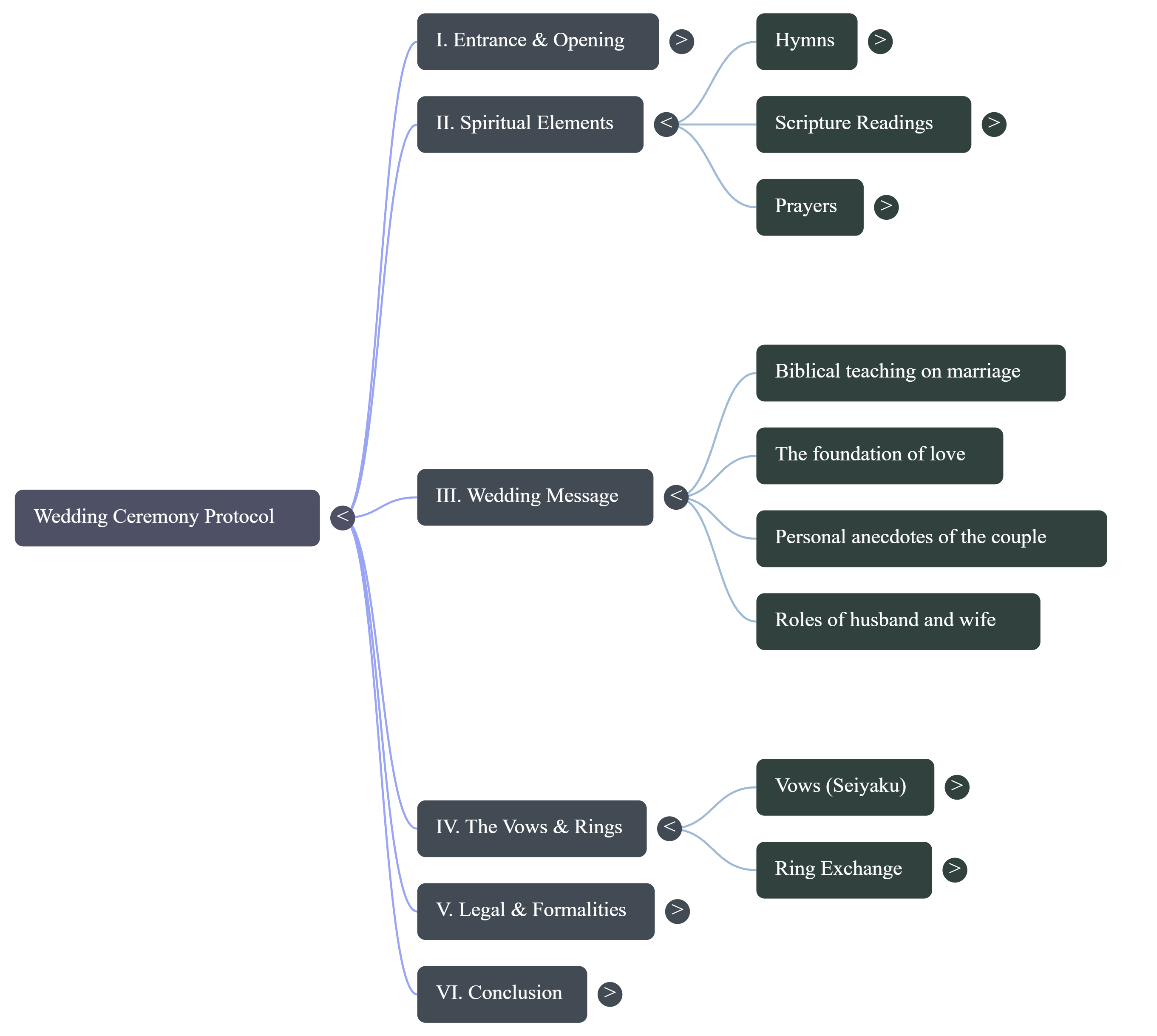

Architecture

The prior script corpus serves as grounding context, a themed prompt controls tone and structure, and reviewers iterate until the final script is accepted.

[10 Prior Script Documents]

|

Grounding + style constraints

|

NotebookLM generation pass

|

Human review and edit loop

|

Final bilingual ceremony script

Tech Details

RAG / Prompt Patterns

- Grounding prompts that cite prior script motifs and structure.

- Style-control blocks for theme, pace, and ceremonial language.

- Iterative critique prompts to tighten bilingual consistency.
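The three patterns above can be sketched as plain prompt text that is pasted into a NotebookLM chat. This is a minimal illustration, not the project's actual templates: the function names, section labels, and sample motifs are all hypothetical, and nothing here calls a NotebookLM API.

```python
def build_generation_prompt(theme: str, pace: str, register: str, motifs: list[str]) -> str:
    """Assemble a grounding block and a style-control block as one prompt string."""
    motif_lines = "\n".join(f"- {m}" for m in motifs)
    return "\n".join([
        "GROUNDING",
        "Use only the uploaded prior scripts as sources.",
        "Reuse these motifs and keep their section order:",
        motif_lines,
        "",
        "STYLE",
        f"Theme: {theme}",
        f"Pace: {pace}",
        f"Ceremonial language: {register}",
        "",
        "OUTPUT",
        "Write each section in Japanese, then in English.",
    ])


def build_critique_prompt(draft: str) -> str:
    """Wrap a draft in a follow-up prompt that asks for bilingual consistency fixes."""
    return "\n".join([
        "Review the bilingual draft below.",
        "List every section where the Japanese and English differ in meaning or tone,",
        "then propose a corrected pair for each.",
        "",
        draft,
    ])


# Hypothetical values; only the theme comes from the project description.
prompt = build_generation_prompt(
    theme="Ancient Grecian",
    pace="slow and formal",
    register="ceremonial, polite",
    motifs=["opening welcome", "exchange of vows", "closing blessing"],
)
print(prompt)
```

Keeping grounding and style in separate labeled blocks makes it easier to change one without disturbing the other when comparing prompt variants.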

Tools

- NotebookLM collaborative workspace

- Prompt templates for style and grounding checks

- Manual editorial checkpoints for quality assurance

Constraints

- Need to preserve nuance across Japanese and English.

- Theme-specific language can drift without tight constraints.

- Output quality depends on source corpus relevance.

Tradeoffs

- Strong style constraints improve consistency but can reduce creative variation.

- Faster generation cycles can increase reviewer burden if grounding is weak.


Lessons Learned / What I'd Improve Next

Lessons Learned

- Grounding quality matters more than prompt verbosity.

- Side-by-side bilingual checks catch tone drift early.

- Human review cadence should be planned as part of the generation workflow.
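A side-by-side check can be as simple as pairing sections by position before a reviewer reads them. The sketch below assumes sections are separated by blank lines and uses invented sample text; it only flags structural gaps, and judging tone remains a human task.

```python
from itertools import zip_longest


def split_sections(script: str) -> list[str]:
    """Split a script into sections on blank lines (an assumed convention)."""
    return [part.strip() for part in script.split("\n\n") if part.strip()]


def pair_sections(japanese: str, english: str) -> list[tuple[str, str]]:
    """Pair sections by position, padding the shorter side with empty strings."""
    return list(zip_longest(split_sections(japanese), split_sections(english), fillvalue=""))


def unpaired(pairs: list[tuple[str, str]]) -> list[int]:
    """Return 1-based section numbers where one language is missing."""
    return [i for i, (ja, en) in enumerate(pairs, start=1) if not ja or not en]


# Illustrative sample text, not taken from any real script.
ja_script = "皆様、ようこそ。\n\n誓いの言葉を交わします。"
en_script = "Welcome, everyone."

pairs = pair_sections(ja_script, en_script)
for number, (ja, en) in enumerate(pairs, start=1):
    print(f"[{number}] JA: {ja or '(missing)'}")
    print(f"    EN: {en or '(missing)'}")
print("Sections needing attention:", unpaired(pairs))
```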

What I'd Improve Next

- Add terminology guardrails for vow and ceremonial phrasing consistency.

- Add rubric-based scoring before reviewer handoff.

- Add versioned prompt packs by ceremony style.
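The first two improvements could start as small pre-handoff checks like the sketch below. Everything in it is an assumption made for illustration: the glossary entries, the rubric criteria, the 1 to 5 scale, and the weights are hypothetical and were not part of the delivered workflow.

```python
# Hypothetical glossary: approved Japanese rendering for each English term.
GLOSSARY = {
    "vow": "誓い",
    "blessing": "祝福",
}


def missing_terms(english: str, japanese: str, glossary: dict[str, str]) -> list[str]:
    """Return English terms used in the English draft whose approved
    Japanese rendering does not appear in the Japanese draft."""
    english_lower = english.lower()
    return [
        en_term
        for en_term, ja_term in glossary.items()
        if en_term in english_lower and ja_term not in japanese
    ]


def rubric_score(scores: dict[str, int], weights: dict[str, float]) -> float:
    """Weighted average of per-criterion scores (assumed 1 to 5 scale)."""
    total_weight = sum(weights.values())
    return sum(scores[name] * weight for name, weight in weights.items()) / total_weight


# Illustrative inputs only.
flagged = missing_terms("We exchange our vows.", "祝福を贈ります。", GLOSSARY)
score = rubric_score(
    scores={"tone": 4, "fidelity": 5},
    weights={"tone": 1.0, "fidelity": 1.0},
)
print("Terms to check:", flagged)
print("Rubric score:", score)
```

A substring match is deliberately crude: it treats "vows" as a use of "vow" and cannot tell whether a term is used correctly, so it works as a prompt for the reviewer and not as a pass/fail gate.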

Repro Notes / Demo Walkthrough

The public demo is not runnable; follow the walkthrough steps below instead.

- Load a curated corpus of prior scripts and define style constraints.

- Run initial grounded generation and compare outputs by prompt variant.

- Review and revise each draft with the bride and groom, repeating until the final script is accepted.